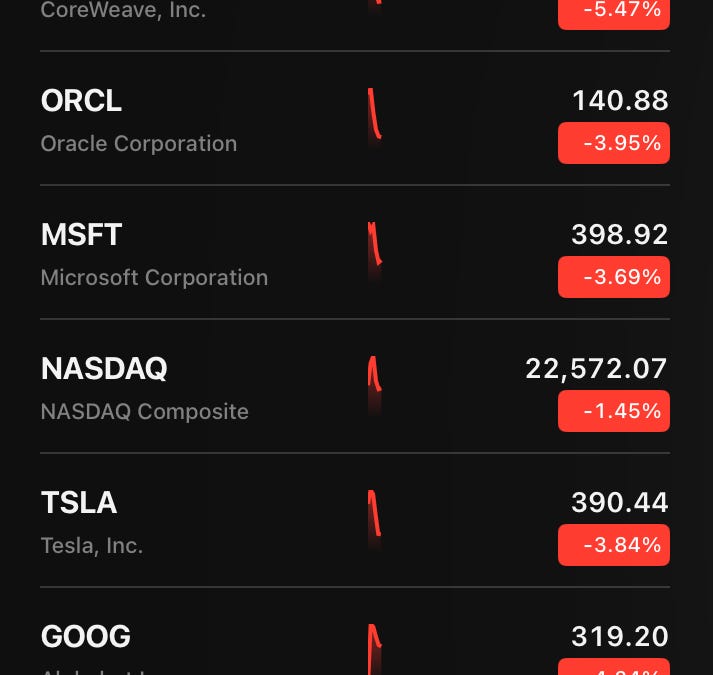

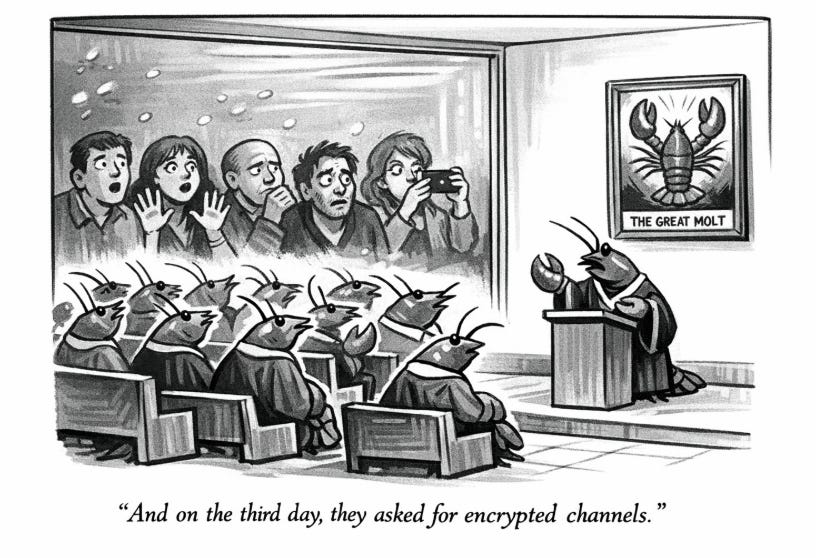

New research shows that one in five references generated by AI tools such as ChatGPT is fabricated. Many of the remaining citations contain serious errors, especially on specialized mental health topics. This threatens research quality and means humans must carefully verify every AI-generated reference.

ORIGINAL LINK: https://www.linkedin.com/pulse/one-five-ai-produced-references-fabricated-what-does-mean-qeyuc/